Why cybersecurity in the age of agentic AI calls for “governed innovation,” according to Kyndryl’s Global Practice Leader for Cyber Resilience and Connectivity

Article co-created by Bloomberg Media Studios and Kyndryl

AI’s rapid maturation has opened the door to a new era of enterprise opportunity and risk. And while C-suite peers set their sights on potentially double-digit productivity gains, cost reductions and growth projections, CISOs are confronting a rapidly changing and uncharted threat landscape. Once focused on the actions of human agents, CISOs must now understand and prepare for attacks from AI agents, which can operate on a much greater scale, and with an authenticity that’s difficult to distinguish from human activity.

Nearly a quarter of cybersecurity leaders reported that their companies came under an AI-powered attack in the previous year, according to the 2025 CISO Village Survey, conducted by the technology venture capital firm Team8 and released last July.

Survey responses suggest that the actual number may be even higher: AI-driven threats are designed to mimic human activity, which makes them difficult to distinguish from the attacks that IT professionals have been fending off for years. This “shadow scale” is precisely why cybersecurity leaders must treat AI-powered threats with “extra and very specific urgency,” says Paul Savill, Global Practice Leader for Cyber Resilience and Connectivity at Kyndryl.

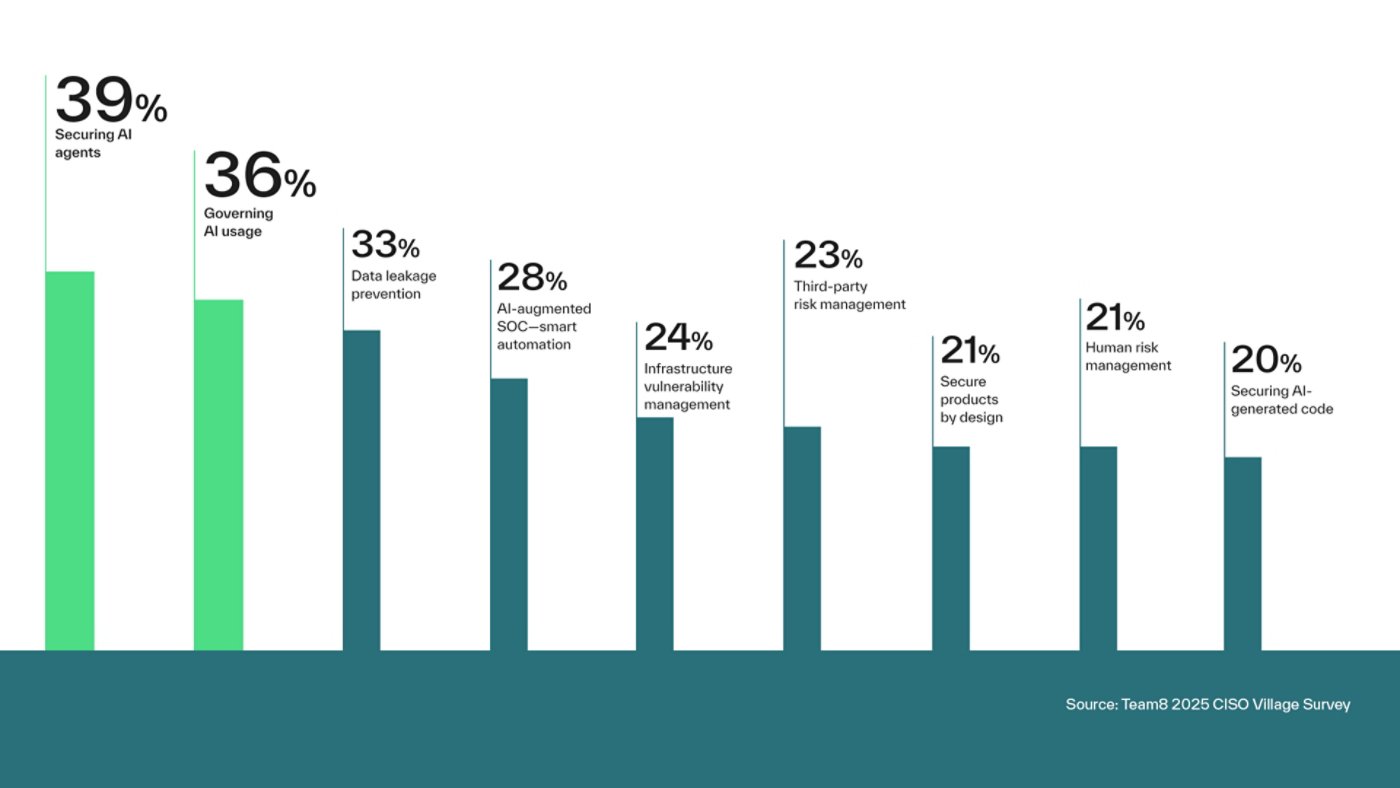

Top CISO pain points

Agentic AI has overtaken traditional cybersecurity concerns such as vulnerability management.

The great AI mindset shift

To meet this urgency, CISOs must pivot from static defenses to dynamic resilience — systems that anticipate, absorb and recover from disruption.

AI-powered threats don’t just deploy malicious payloads; they also probe the environment for weaknesses. Team8’s CISO survey respondents reported that up to 40% of critical vulnerabilities in their service-level agreements remain unpatched, leaving a pathway for AI to exploit.

“For years, security was intended to be the wall that kept the threats out, and resilience was what happened after a breach,” Savill says. “Today, true security means withstanding and recovering from attacks with minimal business impact.”

AI agents: Productivity booster or threat multiplier?

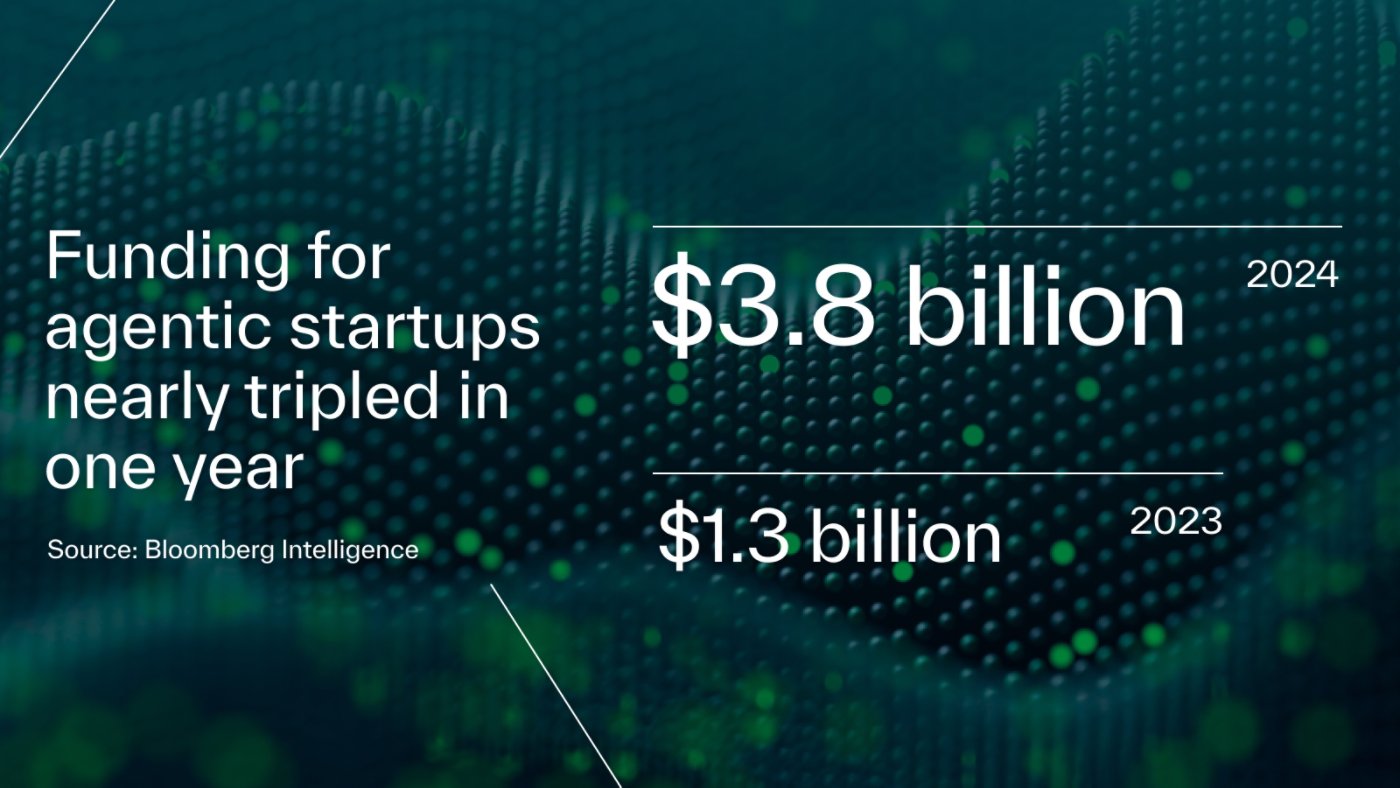

Bloomberg Intelligence reports that in 2024, funding for agentic startups nearly tripled, reaching $3.8 billion, up from $1.3 billion the year before.

This rapid acceleration reflects how quickly the tech community has moved from generative to agentic AI, a shift whose security implications, Savill says, often go unconsidered.

He cites the example of a traditional phishing scam: A generative AI command might be used to create a phishing email that targets customers of a health care institution and prompts them to change their passwords.

“With agentic AI, you don’t give it a command — you give it a goal: ‘Breach the company, find their financial data and send it back to me.’ And the AI agent is going to perform its task: It builds a plan, it builds its phishing attack, it builds the mechanism by which it penetrates the organization, and it adapts as it goes,” says Savill.

Governed innovation: The pragmatist’s path to AI advantage

The Team8 CISO survey shows that cybersecurity leaders are beginning to share Savill’s concerns, with more than a third of respondents citing the securing of AI agents as their most urgent concern.

As more organizations onboard agents as part of their workforce, Savill calls for “governed innovation” — a method to secure not just infrastructure, but the AI systems themselves.

“Governance is the foundation for a safe scale,” asserts Savill. “By applying structured oversight early, before agents become deeply embedded in workflows, CISOs can help organizations capitalize on agentic AI’s potential while mitigating risk and enabling trust and resilience. Enterprises must establish the ‘rules of the game’ before agents begin to play.”

Savill advocates treating AI agents like privileged insiders: Define their mission, certify their behavior, enforce policy and monitor continuously. This could mean adding a new job title to the cybersecurity team: AI governor, a role Savill describes as a mix of AI strategist, ethicist and risk officer all in one.

Unlike traditional employees, AI agents can be granted administrative privileges that span multiple departments, from finance and customer support to logistics and security. Savill says the role of an AI governor is to ask: “‘What’s the mission of the agent? What’s it supposed to do?’ You must define boundaries; define how these particular agents are supposed to work; what permissions they need, and don’t need, to access systems and data; and particularly, how they’re supposed to work when they’re under pressure.”

Savill prescribes a four-part framework for agentic AI governance and digital trust:

Agent registry: If AI agents are going to be entrusted to work for your organization, create a registry and begin to understand who they are, what they’re tasked with and what they’re capable of.

Certification sandbox: Savill suggests creating a supervised production twin, a production-like environment instrumented with enhanced monitoring and restrictions, to observe and validate agent behavior prior to enterprise-wide release.

Policy engine: This is a mechanism by which the AI governor can implement a shut-off switch. “A secure-by-design approach to creating AI agents can mitigate the risk of agents making up their own rules. A policy engine provides the confidence that if the unexpected occurs, we have the capacity to contain it.”

Monitoring capabilities: Finally, create a consistent and sustainable process for monitoring and measuring the effectiveness of your AI agents, including their performance, reliability and adherence to enterprise policies, controls and expected behaviors.
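To make the four parts concrete, here is a minimal Python sketch of how they might fit together. It is an illustration under stated assumptions, not Savill's or Kyndryl's implementation: the class names (`AgentRecord`, `Governor`), the agent ID, and the action names are all hypothetical, and a real deployment would rely on an identity provider, an isolated sandbox environment and proper telemetry rather than in-memory objects.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """Registry entry (hypothetical): the agent's identity, mission and scope."""
    agent_id: str
    mission: str
    allowed_actions: frozenset[str]
    certified: bool = False  # set only after sandbox validation
    enabled: bool = True     # flipped off by the policy engine's shut-off switch


@dataclass
class Governor:
    """Toy stand-in for registry, certification, policy engine and monitoring."""
    registry: dict[str, AgentRecord] = field(default_factory=dict)
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def register(self, record: AgentRecord) -> None:
        # Agent registry: record who the agent is and what it is tasked with.
        self.registry[record.agent_id] = record

    def certify(self, agent_id: str, observed_actions: list[str]) -> bool:
        # Certification sandbox: pass only if some behavior was observed
        # and every observed action stayed within the declared scope.
        record = self.registry[agent_id]
        record.certified = bool(observed_actions) and all(
            action in record.allowed_actions for action in observed_actions
        )
        return record.certified

    def authorize(self, agent_id: str, action: str) -> bool:
        # Policy engine: deny unknown, uncertified, disabled or out-of-scope requests.
        record = self.registry.get(agent_id)
        allowed = (
            record is not None
            and record.certified
            and record.enabled
            and action in record.allowed_actions
        )
        # Monitoring: every decision is logged for later review.
        self.audit_log.append((agent_id, action, allowed))
        return allowed

    def shut_off(self, agent_id: str) -> None:
        # Shut-off switch: contain an agent without deleting its record.
        self.registry[agent_id].enabled = False

    def denial_count(self, agent_id: str) -> int:
        # Monitoring metric: how often this agent was denied.
        return sum(1 for a, _, ok in self.audit_log if a == agent_id and not ok)


# Example usage with made-up names.
governor = Governor()
governor.register(
    AgentRecord("invoice-bot", "Reconcile invoices",
                frozenset({"read_invoices", "flag_mismatch"}))
)
governor.certify("invoice-bot", ["read_invoices", "flag_mismatch"])
governor.authorize("invoice-bot", "read_invoices")   # permitted
governor.authorize("invoice-bot", "export_payroll")  # denied and logged
governor.shut_off("invoice-bot")
governor.authorize("invoice-bot", "read_invoices")   # denied after shut-off
```

The design choice worth noting is deny by default: an agent that is unregistered, uncertified or switched off gets nothing, which mirrors the insider-risk framing of granting only the permissions an agent needs.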

“Most organizations need to begin to think about this as if it’s an insider risk issue. Because at the end of the day, these agents are insiders.”

Paul Savill | Global Practice Leader, Cyber Resilience and Connectivity, Kyndryl

With governed innovation, CISOs can help companies pursue the rewards of agentic AI — faster operations, clearer decisions, stronger resilience — while reducing insider risk. The destination isn’t autonomy at all costs. It’s a trustworthy scale, with guardrails that earn confidence across the business.
